This Expert Is Looking to Solve the Technology Ethics Equation

Life or death decisions don’t come around very often — unless you’re a doctor. Even the routine choice of whether or not to order another test could mean the difference between detecting a cancerous tumor early or letting it spread. During the COVID-19 pandemic, doctors were forced to decide which patients should get access to ventilators in short supply. Big or small, decisions like these require ethical considerations that are rarely simple. Now, with the rapid ascent of AI in medicine, an urgent question has emerged: Can AI systems make ethical medical decisions? And even if they can, should they?

Today, most AI systems are powered by machine learning, where data-hungry algorithms automatically learn patterns from the information they’re trained on. When new data is input into the algorithms, they output a decision based on what they’ve gleaned. But knowing how they arrived at their decision can be challenging when all that lies between their input and output is a dizzying array of opaque, uninterpretable computations.

To find out the current state of AI’s ethical decision-making in medicine, Discover spoke with Brent Mittelstadt, a philosopher specializing in AI and data ethics at the Oxford Internet Institute, U.K.

Q: First of all, is it possible to know exactly how an algorithm makes a medical decision?

BM: Yes, but it depends on the type of algorithm and its complexity. For very simple algorithms, we can know the entire logic of the decision-making process. For the more complex ones, you could potentially be working with … hundreds of thousands or millions of decision-making rules. So, can you map how the decision is made? Absolutely. That’s one of the great things about algorithms: You [often] can see how these things are making decisions. The problem, of course, is how do you then turn that into something that makes sense to a human? Because we can typically hold between five and nine things in our mind at any given time when we’re making a decision, whereas the algorithm can have millions and can have interdependencies between them. … It’s a very difficult challenge. And you tend to have a tradeoff between how much of the algorithm you can explain and how true that explanation actually is, in terms of capturing the actual behavior of the system.
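The tradeoff Mittelstadt describes can be made concrete with a toy sketch. The Python below is purely illustrative and is not drawn from any real medical system: the three-rule `model`, the candidate `explanations` and the `fidelity` measure are all assumptions invented for this example. It compares shorter and longer explanations by how often each one agrees with the model it claims to describe.

```python
from itertools import product

def model(a, b, c, d):
    """Stand-in for a complex system: three interacting rules over four yes/no inputs."""
    return (a and not b) or (b and c and not d) or (not a and not c and d)

# Candidate explanations, from shortest to most complete (all hypothetical).
explanations = {
    "one condition": lambda a, b, c, d: a,
    "two conditions": lambda a, b, c, d: a and not b,
    "all three rules": model,
}

def fidelity(explanation):
    """Fraction of all possible inputs on which the explanation matches the model."""
    cases = list(product([False, True], repeat=4))
    agree = sum(bool(explanation(*x)) == bool(model(*x)) for x in cases)
    return agree / len(cases)

scores = {name: fidelity(fn) for name, fn in explanations.items()}
for name, score in scores.items():
    print(f"{name}: {score:.3f}")
```

In this toy case the one-condition explanation matches the model on 62.5 percent of inputs, the two-condition version on 75 percent, and only the full rule set matches every time. With three rules the full set is still readable; with millions, it would not be.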

Q: Is that an ethical problem in itself — that we may not understand so much of how they’re making these decisions?

BM: Yeah, I think it can be. I have a belief that if a life-changing decision is being made about you, then you should have access to an explanation of that decision, or at least, some justification, some reasons for why that decision was taken. Otherwise, you risk having a Kafka-esque system where you have no idea why things are happening to you. … You can’t question it, and you can’t get more information about it.

Maybe you have a system that is helping the physician reach a diagnosis, and it’s giving you a recommendation, saying, “I have 86 percent confidence that it’s COVID-19.” In that case, if the physician cannot have a meaningful dialogue with the system to understand how it came to that recommendation, then you run into a problem. The physician is trusted by the patient to act in their best interest, but the doctor would be making decisions on the basis of trust that the system is as accurate or as safe as is claimed by the manufacturer.

Q: Can you give an example of a type of ethical decision that an AI system could make for a doctor?

BM: I would hesitate to say that there would be an AI system that is directly making an ethical decision of any sort, for a number of reasons. One is that to talk of an automated system engaging in ethical reasoning is strange. And I don’t think it’s possible. Also, doctors would be very hesitant to turn over their decision-making power to a system entirely. Taking a recommendation from a system is one thing; following that recommendation blindly is something completely different.

Q: Why do you think it’s impossible for AI to engage in ethical reasoning?

BM: I suppose the easiest way to put it is that, at the end of the day, these are automated systems that follow a set of rules. And those can be rules about how they learn about things, or they can be decision-making rules that are preprogrammed into them. So, let’s say that you and I have agreed through discussion that … in your actions, you should produce the greatest possible benefit for the greatest number of people. What we can then do is design a system that follows that theory. So [that system] is, through all of its learning, through all of its decision-making, trying to produce the greatest possible benefit for the greatest number of people. You wouldn’t say that that system, in applying that rule, is engaging in any sort of ethical reasoning, or creating ethical theory, or doing the thing that we would call ethical decision-making in humans — where you’re able to offer reasons and evidence and have a discussion over what is normatively right.

But you can make a system that looks like it’s making ethical decisions. There was a good example recently. It was essentially a system where it had a bunch of decision-making rules that were defined by the wisdom of the crowd. And people immediately started testing it, trying to break it and figure out what its internal rules were. And they figured out that, as long as you end the sentence with [a phrase like] “produces the greatest possible happiness for all people,” then the system would tell you whatever you’re proposing is completely ethical. It could be genocide, and as long as [it was worded to suggest that] genocide creates the greatest happiness for the greatest number of people, the system would say, yes, that’s ethical, go ahead and do it. So, these systems are, at least when it comes to ethical reasoning, incapable of it. They’re ethically stupid. It’s not something that they can actually engage in, because you’re not talking about just objective facts. You’re talking about norms and subjectivity.

Q: If AI systems can’t engage in authentic ethical reasoning on their own, are there other approaches being used to teach them what to do with an ethical component of a decision?

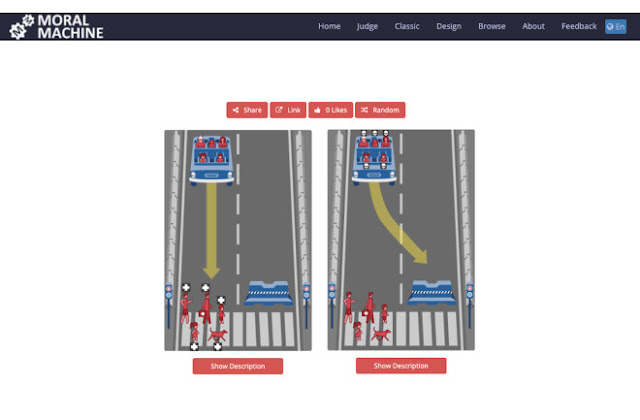

BM: There was the Moral Machine experiment. So that was a very large global study out of MIT, where they built this website and a data-collection tool that was based around the Trolley Problem thought experiment. And the idea was that you’d get people from all over the world to go through several scenarios about which lane an autonomous car should pick. In one lane, you might have an elderly woman and a baby. In the other lane, you’d have a dog. … You could collect a bunch of data that told you something about the moral preferences or the ethics of people in general.

But the key thing here is the approach taken was essentially [creating] moral decision-making rules for robots or for autonomous cars through the wisdom of the crowd, through a majority-rules approach. And that, to me, is perverse when it comes to ethics. Because ethics is not just whatever the majority thinks is right. You are supposed to justify your reasons with evidence or rationality or just reasoning. There’s lots of different things that can make you accept an ethical argument. But the point is, it’s about the argumentation and the evidence — not just the number of people that believe something.
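A minimal sketch shows what a majority-rules approach leaves out. The survey `responses` below are invented for illustration and have nothing to do with the actual Moral Machine data; `majority_rule` is a hypothetical helper, not the study's method. The point is only that a vote count never looks at the reasons people give.

```python
from collections import Counter

# Hypothetical survey responses: (choice, reason given). Invented for illustration.
responses = [
    ("swerve", "protects the most people"),
    ("stay", "the car should not choose who is harmed"),
    ("swerve", ""),
    ("swerve", "gut feeling"),
    ("stay", "passengers accepted the risk by riding"),
]

def majority_rule(responses):
    """Return the most common choice. The reasons are never inspected."""
    votes = Counter(choice for choice, _reason in responses)
    return votes.most_common(1)[0][0]

decision = majority_rule(responses)
print(decision)
```

Here "swerve" wins three votes to two, even though one of those votes came with no reason at all and another with only a gut feeling.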

Q: What other ethical concerns do you have about AI being used in medical decision-making?

BM: In medicine, it’s a well-known fact that there are very significant data gaps, in the sense that much of medicine historically has been developed around the idea of a white male body. And you have worse or less data for other people — for women, for people of color, for any sort of ethnic minority or minority group in society. … That is a problem that has faced medical research and medical decision-making for a long time. And if we’re training our medical [AI] systems with historical data from medicine — which of course we’re going to do, because that’s the data we have — the systems will pick up those biases and can exacerbate those biases or create new biases. So that is very, very clearly an ethical problem. But that is not about the system engaging in ethical reasoning and saying, “You know what, I want to perform worse for women than I do for men.” It’s more that we have bias in society, and the system is picking it up, inevitably replicating and exacerbating it.

If we engage in this sort of thinking, where we think of these systems as magic or as objective, because they’re expensive, or they’re trained on the best data, [or] they don’t have that human bias built into them — all of that is nonsense. They’re learning from us. And we have created a biased world and an unequal world.
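How a data gap turns into unequal performance can be sketched with a toy example. Everything in the Python below is synthetic and assumed for illustration: the two groups, the single measurement, and the idea that the condition shows up at a lower reading in the underrepresented group. It is not real medical data or a real diagnostic method. The "training" step simply picks the one cutoff that makes the fewest mistakes on the pooled records.

```python
# Synthetic data only: (measurement, has_condition) pairs for two hypothetical groups.
# Assumption for illustration: the condition appears at a lower measurement in group B.
group_a = [(x, x >= 6) for x in range(10)] * 9   # 90 records, well represented
group_b = [(x, x >= 3) for x in range(10)]       # 10 records, underrepresented

def errors(threshold, records):
    """Count records misclassified by the rule 'flag if measurement >= threshold'."""
    return sum((x >= threshold) != label for x, label in records)

# "Training": choose the single threshold with the fewest errors on the pooled data.
pooled = group_a + group_b
best = min(range(11), key=lambda t: errors(t, pooled))

def accuracy(records):
    """Share of records the learned threshold classifies correctly."""
    return (len(records) - errors(best, records)) / len(records)

print(best, accuracy(group_a), accuracy(group_b))
```

Because group A supplies nine of every 10 records, the learned cutoff lands on group A's pattern. The rule is right every time for group A and only 70 percent of the time for group B, although nothing in the code mentions either group by name.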